How to get attribution right in AI Search

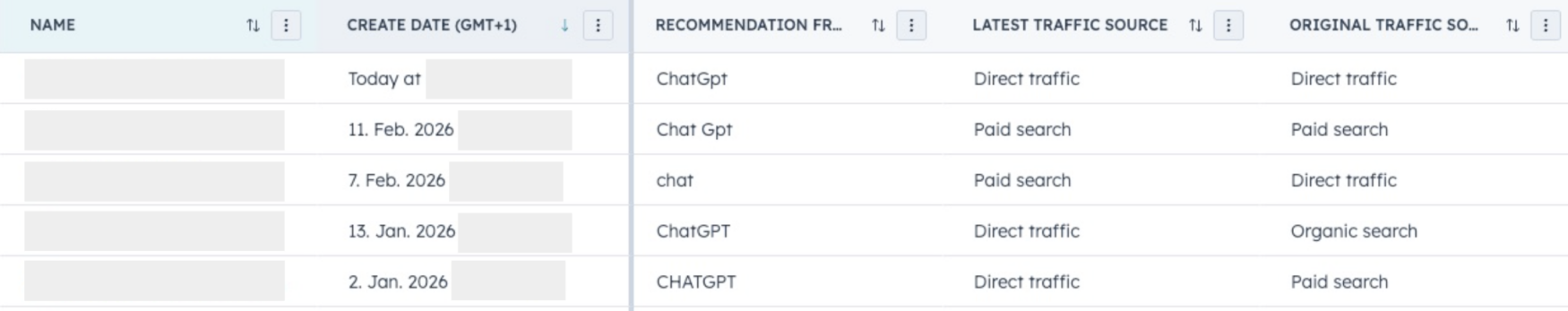


It’s Tuesday, 10am. You check your CRM. Five new leads came in yesterday. HubSpot attributed them to Direct, Paid Search, and Organic Search. But when you asked the leads directly, all five said the same thing: “I found you on ChatGPT.” This is the new attribution reality for B2B companies investing in AI search visibility. Your analytics are blind to the channel that’s increasingly shaping your pipeline, and you’re making budget decisions based on incomplete data. Here’s how Radyant approaches the fix.

Key takeaways

- Standard analytics heavily understate AI search’s contribution to pipeline. Only a tiny fraction of conversions show up as AI referral traffic, while self-reported data across our clients revealed that up to 30% of leads actually discovered the brand through AI tools.

- Radyant’s 3-layer attribution model (click-based, self-reported, verbal from sales) can be implemented in under 24 hours and provides the triangulated view needed to make accurate budget decisions.

- Mandatory free-text “How did you hear about us?” fields outperform dropdowns. LLMs now make analyzing thousands of free-text responses trivial, removing the historical objection to this approach.

- AI-attributed leads convert better and close faster. Across Radyant’s B2B SaaS clients, AI-attributed trials convert up to 50% better than the channel average, and external data shows AI search leads close roughly 10 days faster than traditional search leads.

Looking for a shortcut to drive more organic growth from your content, SEO & AI Search efforts? Request a free growth audit from Radyant to get an honest assessment of your organic growth potential.

The attribution blind spot nobody talks about

Here’s the uncomfortable math: Wynter’s data shows that 84% of B2B buyers now use AI for vendor discovery, and 68% start their research in AI tools before they ever touch Google. Forrester confirms 89% of B2B buyers have adopted generative AI as a top source of self-guided information across every phase of buying.

An inside look into the messy attribution reality of AI Search

Yet when those buyers eventually land on your website, your analytics have no idea where they actually came from.

The reason is structural. When someone asks ChatGPT “What’s the best asset management software for mid-size companies?” and gets a recommendation, and then types your brand name into the browser bar, it doesn’t show as ChatGPT. It either auto-completes to your domain (which would be “Direct”) or triggers a Google Search, which leads to a click on your Brand ads (if you’re running some) or your organic ranking of the homepage. So this traffic shows as “Paid Search” or “Organic Search” in your CRM.

Gaetano DiNardi coined a useful term for this: the Dark SEO Funnel. Just as “dark social” described how sharing happened in private channels that analytics couldn’t see, dark SEO describes how discovery happens in AI environments that attribution systems can’t track. As DiNardi puts it: the exploration and narrowing down happens through LLMs, but when it’s time to fact-check and convert, buyers go to Google. And Google gets the attribution credit.

This isn’t a minor analytics quirk. It’s a systematic distortion of your marketing data that leads directly to misallocated budgets. Channels that get the last click get the budget. Channels that actually drive discovery and shortlisting get nothing.

Why this matters more than you think

The attribution blind spot creates three compounding problems:

1. You can’t justify AI search investment. If you’re investing in GEO/AEO strategy and your analytics show zero leads from AI search, the CFO has every reason to question the spend. Without proper attribution, the ROI of your AI search work is invisible by definition.

2. Other channels get credit they didn’t earn. Branded search and direct traffic look like they’re growing organically, when in reality they’re being fed by AI search recommendations. You end up over-investing in channels that are capturing demand, not creating it.

3. Lead quality signals get lost. From our work with Heyflow, AI-attributed trials convert at 14.3% compared to an 11% channel average. StudioHawk’s experiments found AI search leads closed in roughly 18 days, about 10 days faster than traditional search leads. That’s less time educating, fewer objections, and higher confidence earlier in the process. But if you can’t identify these leads as AI-sourced, you can’t see the quality difference, and you can’t allocate accordingly.

6sense research adds another dimension: B2B buyers now control 61% of their journey before any vendor contact, and 95% of the time, the winning vendor is already on the buyer’s shortlist before first contact. If AI search is shaping those shortlists and you can’t see it, you’re flying blind on the most consequential part of the buying journey.

The 3-layer attribution model

At Radyant, we’ve developed a 3-layer attribution model specifically because no single data source captures the full picture of AI search’s impact. Each layer has strengths and blind spots. The insight comes from triangulating across all three.

Layer 1: Click-based attribution (your CRM and analytics)

What it captures: Direct referral traffic from AI platforms where the click and referrer data are preserved. This includes visits from ChatGPT’s paid plans, some Perplexity clicks, and Google AI Overview citations that pass through as organic.

What it misses: Everything else. Free ChatGPT users, Claude, most Gemini sessions, and the entire category of “AI recommended it, I Googled it” behavior. Around 93% of AI Mode searches end without a click, more than twice the zero-click rate of traditional AI Overviews.

How to set it up (30 minutes):

- In GA4, create a custom channel group that captures AI referral sources. Include hostnames for chat.openai.com, chatgpt.com, perplexity.ai, gemini.google.com, copilot.microsoft.com, claude.ai, and grok.x.ai.

- Create a dedicated segment or audience in GA4 for “AI Search Referrals” so you can track behavior and conversion patterns over time.

- In your CRM (HubSpot, Salesforce), make sure the source field has an option for “AI Search” or “AI Referrals” so these leads don’t get bucketed into something like “Other”.
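If you also want to tag referrers outside GA4 (for example in a form handler or a CRM enrichment step), the same hostname list can be applied in a few lines. This is a minimal sketch, not GA4 configuration: the function name and the “Direct” and “Other” labels are assumptions for illustration.

```python
from urllib.parse import urlparse

# Hostnames from the setup step above; extend the set as new platforms appear.
AI_REFERRER_HOSTS = {
    "chat.openai.com",
    "chatgpt.com",
    "perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
    "claude.ai",
    "grok.x.ai",
}


def classify_referrer(referrer_url: str) -> str:
    """Return "AI Search" when the referrer host matches a known AI platform."""
    if not referrer_url:
        return "Direct"  # no referrer was passed at all
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # Match the listed host itself or any subdomain of it.
    if any(host == h or host.endswith("." + h) for h in AI_REFERRER_HOSTS):
        return "AI Search"
    return "Other"  # hypothetical catch-all label
```

Note that this only sees visits where the referrer survived, so it has the same blind spots as the rest of Layer 1.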

Reality check: Layer 1 is necessary but captures maybe 5-10% of actual AI search influence. AI-referred sessions grew 527% year-over-year (Conductor, November 2025), so the volume is increasing. But even with perfect GA4 setup, you’re seeing a fraction of the real picture. Keep this layer running, but stop treating it as the full story.

Layer 2: Self-reported attribution

What it captures: How leads actually describe their discovery journey, in their own words. This is the single most important layer for understanding AI search’s contribution to pipeline.

What it misses: Leads who skip the field, leads with poor recall, and leads who simplify their multi-touch journey into a single answer.

How to set it up (1 hour):

Add a “How did you hear about us?” field to every conversion form: demo requests, trial signups, contact forms, and gated content. The implementation details matter enormously.

Mandatory free-text is the best option. Here’s why: dropdowns force leads to pick from your predefined categories, which means they can’t tell you “ChatGPT recommended you” if “AI search” isn’t in your dropdown. And you’ll always be behind on updating dropdown options as new AI platforms emerge. Free-text lets leads describe their actual experience.

The historical objection to free-text was that it’s impossible to analyze at scale. That objection is dead. With a simple automation (Make.com + OpenAI API, or a similar workflow), you can auto-categorize every free-text response into standardized source categories in your CRM. The workflow takes an afternoon to build: form submission triggers the scenario, the LLM categorizes the response into your taxonomy (AI Search, Organic Search, Referral, Social, etc.), and the categorized value gets written back to a custom CRM field. You get the richness of free-text input with the reportability of structured data.
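The categorization step in that workflow can be sketched as follows. This is an illustration under assumptions, not Radyant’s actual automation: the taxonomy names are examples, and `llm` is a hypothetical callable that stands in for whatever sends a prompt to your model and returns its text reply (a Make.com module, an API client, and so on).

```python
from typing import Callable

# Example taxonomy; the category names are assumptions, adapt them to your CRM.
TAXONOMY = ["AI Search", "Organic Search", "Paid Search", "Referral", "Social", "Other"]


def build_prompt(response: str) -> str:
    """Build the instruction sent to the LLM for a single form response."""
    return (
        "Categorize this answer to 'How did you hear about us?' into exactly one of: "
        + ", ".join(TAXONOMY)
        + ". Reply with the category name only.\n\nAnswer: "
        + response.strip()
    )


def categorize(response: str, llm: Callable[[str], str]) -> str:
    """Ask the LLM for a category; fall back to "Other" on unexpected output."""
    if not response.strip():
        return "Other"  # nothing to classify, so skip the LLM call
    raw = llm(build_prompt(response)).strip().strip(".").lower()
    by_lower = {category.lower(): category for category in TAXONOMY}
    return by_lower.get(raw, "Other")
```

Validating the reply against the taxonomy before writing it back keeps stray model output out of the CRM field, so reports only ever contain known categories.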

Databox’s experience validates this approach: after adding a “How did you hear about us?” open-text field to their free trial onboarding, they discovered a 67% increase in people citing AI tools as their discovery path. Without self-reported attribution, they would have missed all of it.

Make it mandatory. Yes, adding a required field to your form will slightly increase friction. In our experience, the drop-off is minimal (1-3% on high-intent forms like demo requests) and the data you gain is worth 10x the lost submissions. A lead who won’t spend 5 seconds typing where they heard about you probably isn’t a high-intent lead.

Layer 3: Verbal attribution from sales

What it captures: What prospects actually say in discovery calls, demos, and sales conversations. This is often the richest and most honest data source because people naturally explain their buying journey when talking to a human.

What it misses: It depends entirely on whether sales teams capture and record this information consistently.

How to set it up (2-3 hours):

- Create a custom CRM field called “Verbal Source (Sales-Reported)” that’s separate from both the marketing attribution field and the self-reported field.

- Make it a required field before a deal can move past the discovery stage.

- Train the sales team to ask a natural version of “What prompted you to reach out?” in every first call. Not as a survey question, but as genuine curiosity during rapport-building.

- If you use conversation intelligence tools (Gong, Chorus, etc.), set up keyword alerts for mentions of “ChatGPT,” “Perplexity,” “AI,” “asked Gemini,” etc. This catches mentions that sales reps might not manually record.
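If your call tool lets you export transcripts, the same keyword check can be run as a simple script. This is a rough sketch that assumes transcripts are available as plain text. The term list extends the examples above with a few assumed variants, and the function name is hypothetical.

```python
import re

# Terms from the keyword-alert examples above, plus a few assumed variants.
# Word boundaries keep "ai" from matching inside words like "emailed" or "said".
AI_MENTION = re.compile(
    r"\b(chatgpt|perplexity|gemini|claude|grok|copilot|ai overview|ai)\b",
    re.IGNORECASE,
)


def find_ai_mentions(transcript: str) -> list[str]:
    """Return the distinct AI-related terms found in a transcript, lowercased."""
    seen: list[str] = []
    for match in AI_MENTION.finditer(transcript):
        term = match.group(1).lower()
        if term not in seen:
            seen.append(term)
    return seen
```

A non-empty result is a prompt for the rep to fill in the verbal source field, not a replacement for it: a prospect can mention AI for reasons that have nothing to do with how they found you.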

StudioHawk’s experience is instructive here: AI search influence didn’t show up in their SEO reports or AI prompt tracking tools. It showed up in sales calls. “Found you via Grok, actually,” a new lead said. Without the sales layer, that signal would have been lost entirely.

The biggest challenge with Layer 3 isn’t technical, it’s operational. Most of this intel dies in the call because there’s no structured place to put it. The fix is simple: make the CRM field required, make it part of the sales process, and review it in pipeline meetings.

Triangulation in practice: what to do when the layers disagree

The whole point of three layers is that they will often tell different stories about the same lead. Here’s how to read the signals:

Scenario 1: HubSpot says “Direct,” self-reported says “ChatGPT,” sales confirms “AI search.”

This is the most common pattern. Two out of three layers agree on AI search. The click-based layer is wrong because referrer data was stripped. Attribute to AI search with high confidence.

Scenario 2: HubSpot says “Organic Search,” self-reported says “Google,” sales says “They mentioned seeing us in an AI overview first.”

This is the dark SEO funnel in action. The lead’s self-report is technically accurate (they did use Google), but the sales conversation reveals the upstream AI influence. This is a shared attribution case: AI search created awareness, Google search converted it.

Scenario 3: HubSpot says “Paid Search,” self-reported says “A colleague recommended you,” sales says “They saw our ad.”

AI search isn’t involved here. The layers agree on a paid/referral combination. Not every lead is an AI search lead, and the model should confirm that too.

Scenario 4: All three layers disagree.

This happens. When it does, weight Layer 2 (self-reported) and Layer 3 (verbal) more heavily than Layer 1 (click-based). Click-based attribution tells you what happened technically; self-reported and verbal tell you what happened experientially. The experiential data is more useful for understanding how buyers actually discover you.
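One way to make that weighting explicit is to split credit for each lead across sources. The sketch below is one possible reading of the rules above, not a fixed formula. The 1:2:2 weights are an assumption, and it expects each layer’s raw value to be normalized to a shared category first (for example “ChatGPT” to “AI Search”, “Google” to “Organic Search”).

```python
from collections import defaultdict

# Assumed weights: self-reported and verbal count double the click-based layer.
LAYER_WEIGHTS = {"click": 1.0, "self_reported": 2.0, "verbal": 2.0}


def triangulate(click: str, self_reported: str, verbal: str) -> dict[str, float]:
    """Split credit for one lead across sources, weighted by layer."""
    votes = {"click": click, "self_reported": self_reported, "verbal": verbal}
    scores: dict[str, float] = defaultdict(float)
    for layer, source in votes.items():
        if source:  # skip layers with no recorded value
            scores[source] += LAYER_WEIGHTS[layer]
    total = sum(scores.values())
    if total == 0:
        return {}
    return {source: round(score / total, 2) for source, score in scores.items()}
```

With these weights, Scenario 1 gives AI Search 80% of the credit, Scenario 2 splits it 60/40 between Organic Search and AI Search, and a three-way disagreement leaves the click-based source with the smallest share.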

For board-level reporting, we recommend building a simple triangulated attribution report that shows:

- Click-based source distribution (what your CRM says)

- Self-reported source distribution (what leads say)

- The delta between them (the attribution gap)

- Pipeline and revenue attributed to each source using the triangulated view

The delta is the most important number. If your CRM says 2% of leads come from AI search but self-reported data says 18%, you have a 9x attribution gap. That gap directly translates to misallocated budget.
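The gap calculation itself is simple enough to script from a CRM export. This is a minimal sketch, assuming you have one click-based and one self-reported source value per lead. The function names are hypothetical and the sample data is made up to match the 2% versus 18% example.

```python
from collections import Counter


def source_share(sources: list[str]) -> dict[str, float]:
    """Share of leads per source, as a fraction of all leads."""
    total = len(sources)
    if total == 0:
        return {}
    return {source: count / total for source, count in Counter(sources).items()}


def attribution_gap(click_sources: list[str], self_reported: list[str],
                    source: str = "AI Search"):
    """Return (click share, self-reported share, ratio) for one source."""
    click = source_share(click_sources).get(source, 0.0)
    reported = source_share(self_reported).get(source, 0.0)
    ratio = reported / click if click else None  # undefined when the CRM shows zero
    return click, reported, ratio


# Made-up sample of 100 leads: 2 AI Search by click, 18 by self-report.
click_based = ["AI Search"] * 2 + ["Direct"] * 98
self_reported = ["AI Search"] * 18 + ["Direct"] * 82
click_share, reported_share, gap = attribution_gap(click_based, self_reported)
print(f"{click_share:.0%} vs {reported_share:.0%}, gap {gap:.1f}x")
```

The ratio is left undefined when the click-based share is zero, which is common early on. In that case, report the two shares side by side instead.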

Want help building your 3-layer attribution setup? Start with a growth strategy audit and we’ll show you exactly where your attribution gaps are.

Where citation tracking tools fit (and where they don’t)

There’s a growing category of AI visibility tools like Peec AI, Profound, and Scrunch AI that monitor how often your brand gets mentioned in AI responses. These are genuinely useful, but they solve a different problem than attribution.

Think of citation tracking as “Layer 0”: visibility monitoring. It tells you how visible you are in AI search results. It does not tell you whether that visibility is driving pipeline.

The distinction matters because AI recommendations are highly inconsistent. SparkToro found there’s less than a 1-in-100 chance that ChatGPT, asked the same question 100 times, will give you the same list of brands in any two responses. This makes point-in-time citation snapshots inherently noisy. Trends over time are more meaningful than any single measurement.

Use citation tracking tools to monitor your AI search visibility and benchmark against competitors. Use the 3-layer attribution model to connect that visibility to actual business outcomes. They’re complementary, not interchangeable.

For our Planeco Building work, we tracked citation share growing from 55% to over 130% (meaning Planeco appeared more frequently than any competitor in AI responses). That visibility metric was meaningful, but what proved the ROI was the 5x increase in organic leads. The citation tracking showed the cause; the attribution model showed the effect.

What changes when you get attribution right

Fixing attribution isn’t a data hygiene exercise. It’s a strategic unlock that changes how you allocate resources.

Budget allocation shifts. When you can see that AI search is actually driving 15-20% of your pipeline (not the 1-2% your traffic data claims), the investment case for GEO/AEO strategy becomes obvious. You stop over-investing in channels that capture demand and start properly funding channels that create it.

Lead quality becomes visible. Across our B2B SaaS clients, once we could identify AI-attributed trials, we saw they converted up to 50% better than the channel average. That conversion rate premium changes how you think about the channel entirely. It’s not just driving volume; it’s driving better volume.

Sales cycle intelligence improves. When you know a lead came through AI search, you can adjust the sales approach. These leads tend to arrive more educated and with higher intent. StudioHawk’s data showed a 10-day faster close cycle, which means less time educating and more time closing.

Content strategy gets smarter. When you can trace pipeline back to AI search, you can also start understanding which content topics and formats drive the most AI citations. This creates a feedback loop: better content drives more AI visibility, which drives more attributable leads, which informs what content to create next. This is the connection between AI search visibility strategy and attribution that almost nobody makes.

Board conversations change. Instead of “we think AI search is important but we can’t prove it,” you can show: “18% of our pipeline self-reports AI search as their discovery channel, these leads convert 30% better than average, and they close 10 days faster. Here’s why we’re increasing investment.”

The 24-hour implementation checklist

You don’t need a data engineering project. You need an afternoon.

- Hour 1: Set up AI referral tracking in GA4. Create custom channel group with AI platform hostnames. Create corresponding source values in your CRM.

- Hour 2: Add mandatory free-text “How did you hear about us?” field to your demo request form, trial signup form, and contact form.

- Hour 3: Build the LLM categorization automation. Connect your form tool to Make.com (or n8n), route free-text responses through OpenAI’s API for categorization, and write the result back to a custom CRM field.

- Hour 4: Create the “Verbal Source” CRM field for sales. Brief the sales team on the new field and the question to ask. Make the field required before deals can advance past discovery.

- Ongoing (15 min/week): Review the delta between click-based and self-reported attribution in your weekly pipeline review. Adjust budget allocation quarterly based on triangulated data.

The first week of data will be eye-opening. Based on patterns we’ve seen across clients, expect to find that a huge chunk of your “Direct” traffic has an actual source your analytics couldn’t see, and that AI search is responsible for a meaningful share of it.

Databox, for example, added a “How did you hear about us?” open-text field to their free trial onboarding and discovered a 67% increase in people citing AI tools as their discovery path.

This isn’t a new problem. It’s a bigger version of the same problem.

If this sounds familiar, it should. The AI search attribution gap is the dark social problem extended. Five years ago, companies that added self-reported attribution discovered that podcasts, Slack communities, and word-of-mouth were driving far more pipeline than their analytics showed. The companies that acted on that insight reallocated budget and grew faster. The companies that didn’t kept optimizing for last-click attribution and wondered why their pipeline felt stuck.

AI search is the same dynamic at 10x scale. 87.4% of all AI referral traffic comes from ChatGPT alone, and that’s just the trackable clicks. The untrackable influence is orders of magnitude larger.

The companies building attribution infrastructure now will have 6-12 months of triangulated data by the time their competitors start asking “should we track this?” That data advantage compounds into better budget decisions, faster iteration on AI search strategy, and a clearer picture of what’s actually driving growth.

The fix takes 24 hours. The cost of not fixing it compounds every quarter you wait.

If you’re investing in AI search visibility but can’t prove the ROI, the problem isn’t the strategy. It’s the measurement. Talk to Radyant about building your attribution setup.

FAQ

Will people actually fill in a mandatory free-text field?

Yes. On high-intent forms (demo requests, trial signups), we consistently see 95%+ completion rates with minimal drop-off. The key is placement and phrasing. Put it near the end of the form, keep the label simple (“How did you hear about us?”), and don’t add helper text that biases the response. People requesting a demo are motivated enough to type 3-5 words about where they found you.

How do I report triangulated attribution data to the board?

Show three columns: click-based attribution, self-reported attribution, and the delta between them. The delta is the story. When the board sees that AI search accounts for 2% of pipeline in the CRM but 18% in self-reported data, the 9x gap makes the case for you. Then show lead quality metrics (conversion rate, sales cycle length) by attributed source to demonstrate that the “invisible” channel is also the highest-quality one.

What if our sales team won’t consistently fill in the verbal attribution field?

Make it a required field before deals can advance past the discovery stage in your CRM. This is the same approach that worked for getting sales to fill in BANT fields, MEDDIC criteria, or any other required data. If it gates pipeline progression, it gets filled in. Reinforce it by reviewing the data in weekly pipeline meetings so the team sees it being used.

Should I use a citation tracking tool like Peec AI or Profound alongside this model?

Yes, but understand what it does and doesn’t solve. Citation tracking tools monitor your visibility in AI search responses, which is valuable for benchmarking and tracking the effectiveness of your GEO/AEO work. They don’t connect visibility to pipeline. Use them as “Layer 0” for monitoring, and use the 3-layer model for attribution. We’ve broken down the differences between Peec AI and Profound if you’re evaluating options.

How long until I have enough data to make budget decisions?

Most B2B SaaS companies with 50+ leads per month will have a statistically meaningful sample within a couple of weeks. You don’t need thousands of data points. If 30 out of 200 leads self-report AI search as their discovery channel over two months, that’s a 15% attribution signal that your CRM was completely missing. That’s enough to inform a budget conversation.
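To get a feel for how much uncertainty sits around a number like 30 out of 200, you can compute a confidence interval for the share. The sketch below uses the Wilson score interval, which is our choice for illustration and not something the figures above depend on.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# 30 of 200 leads self-reporting AI search, as in the example above.
low, high = wilson_interval(30, 200)
print(f"{low:.1%} to {high:.1%}")
```

For 30 out of 200, the interval runs from roughly 11% to 21%. That is wide, but even the lower end is several times the 1-2% that click-based data typically shows, which is the point of the budget conversation.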

Is AI search attribution different for B2C vs. B2B?

The mechanics are the same, but the stakes differ. In B2B, deal sizes are larger and sales cycles are longer, which means each misattributed lead represents more lost insight. B2B also has the advantage of Layer 3 (verbal attribution from sales calls), which most B2C companies lack. The 3-layer model works for both, but B2B companies get the most value from full implementation because the pipeline impact per lead is higher.
